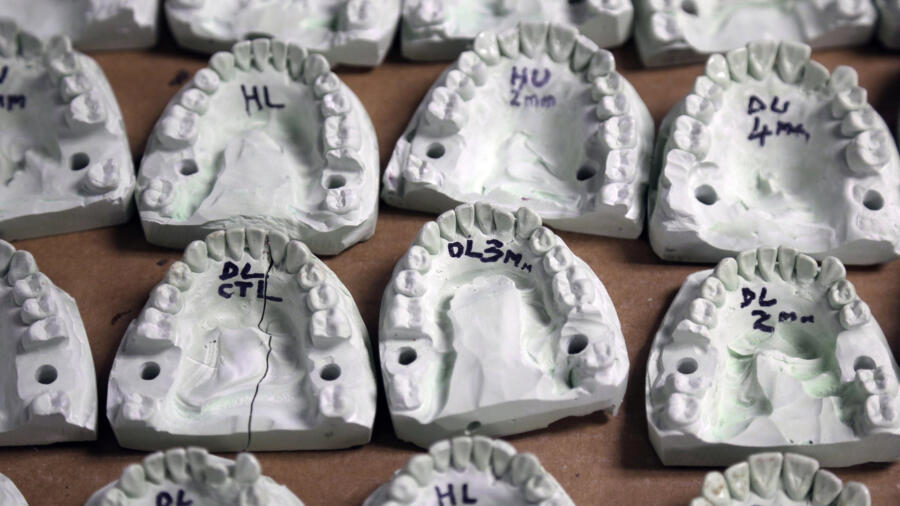

At the trial of Steven Mark Chaney, accused of the double murder of a Dallas couple, nine witnesses backed up the construction worker’s alibi and told the jury that he couldn’t be the killer. But the prosecution had a more convincing witness: a forensic dentist who testified that a bite mark found on one of the victims’ arms was a “one in a million” match with Chaney’s teeth. Chaney was convicted of the killings and sentenced to life in prison in 1989.

But as Chaney endured more than a quarter-century behind bars, the scientific consensus around forensic odontology—the use of bite marks as evidence—began to erode.

In 2015, after research found little evidence for what had been a widely accepted forensic tool, Chaney was released. Even the dentist who had helped secure a conviction admitted his own analysis was junk science. And the Texas Forensic Science Commission last year recommended a moratorium on the use of bite-mark evidence.

Chaney’s case reflects a larger trend in forensic science in recent decades: growing doubts about methods once considered rock-solid, which raise serious concerns about the justice system’s ability to protect the innocent and punish the guilty.

And it isn’t just forensic odontology. Studies have cast doubt on ballistics evidence, hair- and fiber-matching techniques, and even fingerprint analysis, a cornerstone of modern police work.

Two high-profile reports, published by the National Academy of Sciences in 2009 and the President’s Council of Advisors on Science and Technology in 2016, highlighted these problems and laid out a path toward needed reforms. Experts now worry that a recent Department of Justice decision to disband the National Commission on Forensic Science threatens to derail that progress.

Errors in forensic science can stem from shaky science, a tendency toward confirmation bias (privileging data that supports an expert’s pre-existing theory), and the use of long-accepted practices that have simply never been subject to rigorous validity testing. Mistakes pose a great danger because the testimony of forensics experts carries so much weight in the courtroom, thanks to TV dramas that portray crime-scene investigators as unfailing and all-knowing.

Doubts about the validity of forensic-science techniques began to surface in the 1970s. That’s when the Law Enforcement Assistance Administration undertook a test of more than 200 forensic laboratories, sending them 21 blind samples for analysis. Error rates were as high as 71 percent, and for several of the samples, fewer than half the labs got the correct results. This could be a result of invalid scientific tests, errors in processing samples, or both.

“People began taking a closer look at forensic science, and a lot of the critics began saying the emperor has no clothes,” says Ed Imwinkelried, an emeritus law professor at the University of California at Davis.

Another important milestone came in 1993, when the Supreme Court did away with the longstanding Frye rule, which stated that expert forensic testimony would be admissible at trial if it was based on “generally accepted” practices. Instead, the high court created a more rigorous benchmark known as the Daubert rule, requiring that forensic evidence be based in empirically testable science.

The new standard came a decade after the arrival of DNA evidence, which was proving not only very accurate but also able to provide something even more telling: probabilities of error. Because of the nature of DNA, an expert doesn’t simply declare whether two samples match, but calculates the probability of a match.

“The Supreme Court was saying, ‘That’s what we want,’ ” says Imwinkelried.
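To give a sense of the kind of number a DNA expert might report, here is a minimal sketch of a "random match probability" calculation. It assumes the textbook product rule: genotype frequencies at each genetic marker are estimated from allele frequencies and multiplied together, treating the markers as independent. The function names and the allele frequencies are hypothetical, invented purely for illustration; real casework relies on validated population databases and statistical corrections not shown here.

```python
from math import prod


def genotype_frequency(p: float, q: float, homozygous: bool) -> float:
    """Estimate how common a genotype is at one marker.

    Assumes Hardy-Weinberg proportions: p * p when both alleles are the
    same, 2 * p * q when they differ.
    """
    return p * p if homozygous else 2 * p * q


def random_match_probability(loci) -> float:
    """Multiply per-marker genotype frequencies (the product rule).

    Assumes the markers are statistically independent of one another.
    """
    return prod(genotype_frequency(p, q, hom) for p, q, hom in loci)


# Hypothetical allele frequencies, invented for illustration only.
example_profile = [
    (0.10, 0.20, False),  # heterozygous marker
    (0.05, 0.05, True),   # homozygous marker
    (0.25, 0.10, False),  # heterozygous marker
]

rmp = random_match_probability(example_profile)
print(f"Chance a random person shares this profile: about 1 in {1 / rmp:,.0f}")
```

Even with only three made-up markers, the estimated chance of a coincidental match shrinks quickly, which is why profiles built from many markers can yield such small probabilities.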

The court was pushing forensic science away from the dangers of overconfidence and toward the same statistics-based certainty as DNA, Imwinkelried says. “A fingerprint expert used to come in and say, ‘I can say this is the defendant’s right thumbprint to the exclusion of every other person on earth.’ ”

But that has changed. “We have adjusted,” says Bill Schade, a latent-print examiner with 40 years of experience. “We no longer testify to absolute 100-percent certainty.”

“It used to be the death of your career to say, ‘I could be wrong,’ ” he says. Now, he and other experts don’t just give a yes-or-no answer on fingerprint matches; they testify about the strength of their evidence. Statistics, likelihoods and probabilities have become big buzzwords in his profession.

Schade says forensics experts support the shift to a more cautious approach. “Nobody wants to be more correct than the people doing this work.”

The legal system now faces the challenge of applying the statistical rigor of DNA to other forensic disciplines, says Constantine Gatsonis, a professor of biostatistics at Brown University and co-chair of the committee that prepared the 2009 National Academy of Sciences report. While DNA analysis is digital, comprising discrete components that come in only four varieties, fingerprints, tire tracks and shell casings don’t break down so neatly, he says.

Analog patterns like fingerprints need to be converted into digital information if they are to be analyzed more accurately, Gatsonis says. That will be possible with advances in pattern recognition and machine learning, which can break down all the possible curves and whorls of fingerprints, for instance, into “high-dimensional data” that can be compared, he says.
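As a rough illustration of what comparing such data might look like, the sketch below treats each print as a short list of numbers and scores how alike two lists are. The feature values, the variable names and the choice of cosine similarity are all assumptions made for illustration; Gatsonis does not describe a specific method, and a real system would use far more features and a validated scoring model.

```python
import math


def cosine_similarity(a, b):
    """Score two feature vectors by the cosine of the angle between them.

    For non-negative features like these, the score falls between 0 and 1,
    with values near 1 meaning the vectors point the same way.
    """
    if len(a) != len(b):
        raise ValueError("feature vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("feature vectors must be non-zero")
    return dot / (norm_a * norm_b)


# Hypothetical numeric features extracted from three prints (invented values).
crime_scene_print = [0.8, 0.1, 0.3, 0.5]
suspect_a_print = [0.7, 0.2, 0.3, 0.6]
suspect_b_print = [0.1, 0.9, 0.7, 0.0]

score_a = cosine_similarity(crime_scene_print, suspect_a_print)
score_b = cosine_similarity(crime_scene_print, suspect_b_print)
print(f"Similarity to suspect A: {score_a:.2f}")
print(f"Similarity to suspect B: {score_b:.2f}")
```

A score like this is a graded measure, not a yes-or-no verdict, which is in keeping with the shift toward reporting the strength of evidence.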

In the meantime, DNA remains the gold standard for forensic evidence.
